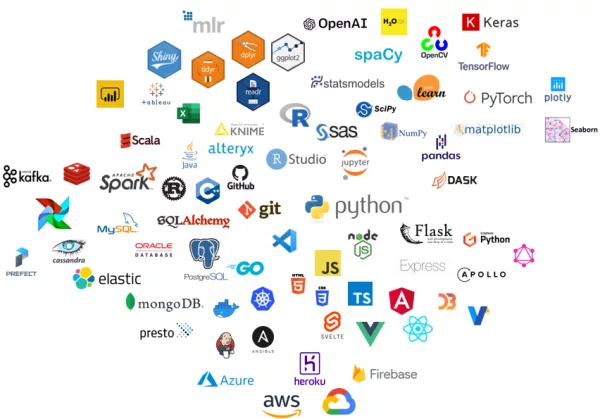

Tools and Technologies in Data Science

Data science is an interdisciplinary field that relies on a broad set of tools and technologies to extract insights from data.

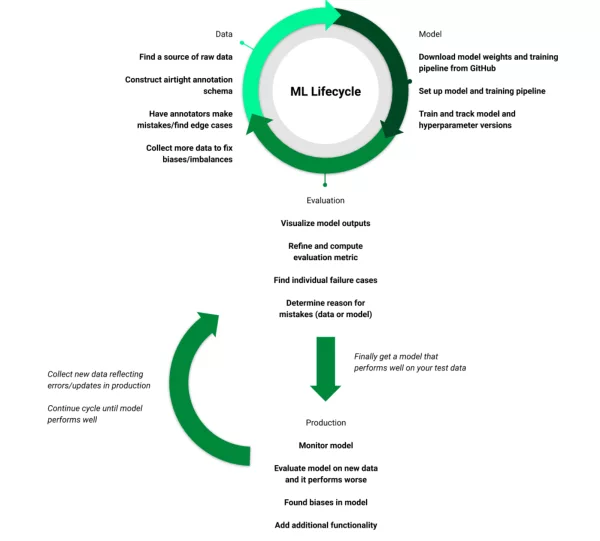

From data collection to model deployment, various tools and platforms play a crucial role in the data science lifecycle. Let’s explore the key categories of tools and technologies that data scientists rely on:

Data Collection and Storage:

- Databases: Databases are structured repositories for storing and managing data. Relational databases (e.g., MySQL, PostgreSQL) are suitable for structured data, while NoSQL databases (e.g., MongoDB, Cassandra) handle unstructured and semi-structured data. NewSQL databases (e.g., Google Spanner) aim to combine the SQL interface and transactional guarantees of relational systems with the horizontal scalability of NoSQL systems.

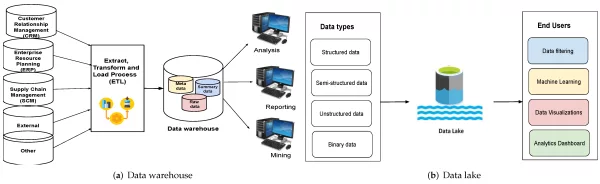

- Data Warehousing: Data warehousing solutions like Amazon Redshift, Google BigQuery, and Snowflake offer scalable and performant platforms for storing and querying large datasets. They allow data scientists to analyze data efficiently and derive insights quickly.

- Data Lakes: Data lakes store vast amounts of raw and processed data, often in its original format. Platforms like Amazon S3, Hadoop HDFS, and Azure Data Lake Storage facilitate data storage, enabling data scientists to access and process data from various sources.
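
To make the relational case concrete, here is a minimal sketch that stores a few rows and aggregates them with SQL. It uses Python's built-in `sqlite3` module as a stand-in for a server database such as MySQL or PostgreSQL; the `orders` table and its values are made up for illustration.

```python
import sqlite3

# In-memory SQLite database, standing in for a server such as PostgreSQL.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer TEXT NOT NULL, amount REAL NOT NULL)"
)

# Parameterized inserts keep values separate from the SQL text.
conn.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("alice", 20.0), ("bob", 35.5), ("alice", 12.5)],
)
conn.commit()

# Total spend per customer, computed by the database itself.
totals = conn.execute(
    "SELECT customer, SUM(amount) FROM orders "
    "GROUP BY customer ORDER BY customer"
).fetchall()
print(totals)
conn.close()
```

The same `GROUP BY` query would run largely unchanged on a data warehouse; what differs is the scale of data the engine is built to scan.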

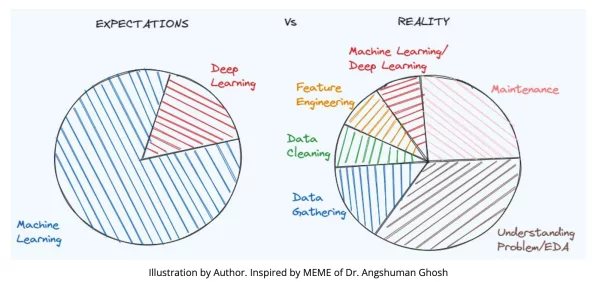

Data Cleaning and Preprocessing:

- Python Libraries: Pandas is a powerful library for data manipulation and analysis. It provides data structures and functions for efficiently cleaning, transforming, and exploring data. NumPy is used for numerical computations and mathematical operations.

- Data Wrangling Tools: OpenRefine, Trifacta, and Alteryx help data scientists clean and transform data through user-friendly interfaces. They assist in tasks like handling missing values, correcting inconsistencies, and merging datasets.

- Data Visualization Tools: Business intelligence tools like Tableau and Power BI, and plotting libraries like Matplotlib, allow data scientists to create graphs, charts, and dashboards. These tools aid in exploratory data analysis, help spot data quality problems such as outliers and gaps, and help communicate insights effectively.
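
The cleaning tasks mentioned above (handling missing values, correcting inconsistencies, and merging datasets) might look like the following in Pandas. This is a small sketch on invented data; the column names and the choice of median imputation are assumptions, not a recommendation for every dataset.

```python
import numpy as np
import pandas as pd

# Invented sample data with a duplicate row, inconsistent text, and a gap.
customers = pd.DataFrame({
    "customer_id": [1, 2, 3, 3],
    "city": [" Paris", "paris", "Berlin", "Berlin"],
    "age": [34, np.nan, 29, 29],
})
orders = pd.DataFrame({
    "customer_id": [1, 2, 3],
    "amount": [20.0, 35.5, 12.5],
})

cleaned = (
    customers
    .drop_duplicates()  # remove the repeated row for customer 3
    .assign(
        # Normalize whitespace and capitalization so " Paris" == "paris".
        city=lambda df: df["city"].str.strip().str.title(),
        # Fill the missing age with the median of the observed ages.
        age=lambda df: df["age"].fillna(df["age"].median()),
    )
)

# Left join keeps every customer, even one without an order.
merged = cleaned.merge(orders, on="customer_id", how="left")
print(merged)
```

Whether to impute, drop, or flag missing values depends on why they are missing, so the `fillna` step is the part most likely to change from project to project.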

Exploratory Data Analysis (EDA) and Visualization:

- Python Libraries: Seaborn, Plotly, and Bokeh provide a range of options for data visualization. Seaborn offers high-level interfaces for creating informative statistical graphics, while Plotly and Bokeh allow for interactive visualizations.

- R: RStudio is a popular integrated development environment (IDE) for R, a programming language for statistical computing and graphics. The ggplot2 package in R is widely used for creating elegant and customizable visualizations.

Feature Engineering:

- Python Libraries: Scikit-learn and Featuretools help create and transform features to enhance model performance. Good feature engineering can lead to models that are more accurate, faster to train, and more robust. Features that capture the underlying patterns and relationships in the data help the model generalize from the training data to new, unseen data.

Examples of Feature Engineering:

- Text Data: In natural language processing (NLP), feature engineering might involve transforming text into numerical representations using techniques like TF-IDF (Term Frequency-Inverse Document Frequency) or word embeddings.

- Time-Series Data: For time-series data, features like moving averages, time lags, and seasonality indicators can provide valuable insights to models predicting future values.

- Image Data: In computer vision, features might be extracted from images using techniques like edge detection, color histograms, or convolutional neural networks (CNNs).
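
As an illustration of the time-series case, the sketch below derives a lag, a moving average, and simple calendar indicators with Pandas. The `sales` data is invented, and the feature names (`lag_1`, `rolling_mean_3`, and so on) are illustrative choices.

```python
import pandas as pd

# Ten days of invented daily sales, starting on a Monday.
sales = pd.DataFrame(
    {"units": [10, 12, 9, 15, 14, 18, 20, 11, 13, 10]},
    index=pd.date_range("2024-01-01", periods=10, freq="D"),
)

# Shift by one day first so each feature only uses past observations.
previous = sales["units"].shift(1)

features = sales.assign(
    lag_1=previous,                                 # yesterday's value
    rolling_mean_3=previous.rolling(window=3).mean(),  # mean of prior 3 days
    day_of_week=sales.index.dayofweek,              # 0 = Monday
    is_weekend=sales.index.dayofweek >= 5,          # simple seasonality flag
)
print(features.head())
```

The first rows contain missing values because there is not enough history yet; they are usually dropped before training. Shifting before computing the rolling mean avoids leaking the current day's value into its own features.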

Machine Learning and AI:

- Machine Learning Libraries: Scikit-learn provides a wide range of machine learning algorithms for classification, regression, clustering, and more. TensorFlow and PyTorch are deep learning frameworks used for building and training neural networks.

- AutoML Platforms: Google AutoML and H2O.ai’s AutoML are platforms that automate the process of selecting and tuning machine learning models, simplifying the model development process.

- Natural Language Processing (NLP): NLTK and spaCy are Python libraries for natural language processing tasks, such as tokenization, part-of-speech tagging, and named entity recognition. BERT and GPT are transformer-based language models: BERT is mainly used for language understanding tasks, while GPT models are mainly used for text generation.

- Computer Vision: OpenCV is a widely used computer vision library that offers tools for image and video analysis. TensorFlow’s Object Detection API enables the detection and localization of objects in images and videos.
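
A typical Scikit-learn classification workflow can be sketched in a few lines. The example below uses the Iris dataset bundled with the library; the choice of logistic regression, the 75/25 split, and the fixed random seed are assumptions made for the illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Hold out a quarter of the data to estimate performance on unseen samples.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# The pipeline fits the scaler on the training data only, then the classifier.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"Held-out accuracy: {accuracy:.2f}")
```

Wrapping preprocessing and the estimator in one pipeline keeps the test data out of the scaling step and lets the whole object be saved and deployed as a unit.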

Model Deployment and Integration:

- Web Frameworks: Flask and Django are popular Python web frameworks used to serve machine learning models behind APIs or web applications. Wrapping a model in an HTTP endpoint lets other systems request predictions without depending on the model's code.

- Containerization: Docker provides a platform for packaging applications and their dependencies into containers, ensuring consistency between development and production environments. Kubernetes manages containerized applications at scale.

- Cloud Services: Cloud platforms like AWS, Azure, and Google Cloud offer services for deploying, hosting, and scaling machine learning models. AWS Lambda, Azure Functions, and Google Cloud Functions allow serverless execution of code.
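
Serving a model as an API with Flask could look roughly like this. It is a sketch only: `predict_score` is a hypothetical placeholder for a trained model, and the `/predict` route and the `features` field are naming assumptions for the example.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def predict_score(features):
    """Hypothetical stand-in for a trained model: averages the inputs."""
    return sum(features) / len(features)


@app.route("/predict", methods=["POST"])
def predict():
    # silent=True returns None instead of raising on a malformed body.
    payload = request.get_json(silent=True) or {}
    features = payload.get("features")
    if not isinstance(features, list) or not features:
        return jsonify(error="expected a non-empty 'features' list"), 400
    return jsonify(prediction=predict_score(features))
```

Saved as `app.py`, this can be started locally with `flask --app app run`. In a real service the placeholder function would be replaced by a model loaded once at startup, and the application would typically be packaged in a container and run behind a production WSGI server.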

Version Control and Collaboration:

- Version Control Systems: Git is a distributed version control system used for tracking changes in code. Platforms like GitHub and GitLab provide repositories for collaborative development and code sharing.

- Project Management: Tools like Jira, Trello, and Asana assist data science teams in tracking tasks, managing workflows, and coordinating projects.

Big Data and Distributed Computing:

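- Hadoop Ecosystem: Hadoop is an open-source framework for distributed storage (HDFS) and processing (MapReduce) of large datasets. Apache Spark is a separate processing engine that can run on a Hadoop cluster and read from HDFS, but does not require Hadoop. It is often used in place of MapReduce because it keeps intermediate data in memory, and it offers APIs for SQL, streaming, and machine learning.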

- Streaming Platforms: Apache Kafka and Amazon Kinesis allow real-time data streaming and processing, enabling organizations to analyze and react to data as it’s generated.

Data Ethics and Privacy:

- Data Governance: Tools like Collibra and Informatica help organizations establish data governance practices, ensuring data compliance, quality, and security.

Continuous Learning and Skill Development:

- Online Courses: Platforms like Coursera, Udacity, and edX offer a plethora of data science courses and specializations, covering a wide range of topics from beginner to advanced levels.

- Blogs and Forums: Websites like Towards Data Science and Stack Overflow provide a platform for data scientists to learn from community discussions, ask questions, and share knowledge.

Data Storytelling and Communication:

- Data Visualization Tools: Flourish and Datawrapper offer tools for creating interactive and engaging data visualizations, enhancing the ability to communicate insights effectively.

- Presentation Software: Tools like PowerPoint and Google Slides assist in crafting compelling presentations to convey findings and recommendations to non-technical stakeholders.